My colleague Christopher Ahlberg, CEO of Recorded Future, recently got in touch to share some exciting news. We had discussed our shared interests a while back at Harvard University. It was clear then that his ideas and existing technologies were very closely aligned to those we were pursuing with Ushahidi’s Swift River platform. I’m thrilled that he has been able to accomplish a lot since we last spoke. His exciting update is captured in this excellent co-authored study entitled “Detecting Emergent Conflicts Through Web Mining and Visualization” which is available here as a PDF.

The study combines almost all of my core interests: crisis mapping, conflict early warning, conflict analysis, digital activism, pattern recognition, natural language processing, machine learning, data visualization, etc. The study describes a semi-automatic system which automatically collects information from pre-specified sources and then applies linguistic analysis to extract user-specified events and entities, i.e., structured data for quantitative analysis.

Natural Language Processing (NLP) and event-data extraction applied to crisis monitoring and analysis is of course nothing new. Back in 2004-2005, I worked for a company that was at the cutting edge of this field vis-a-vis conflict early warning. (The company subsequently joined the Integrated Conflict Early Warning System (ICEWS) consortium supported by DARPA.) Just a year later, Larry Brilliant told TED 2006 how the Global Public Health Information Network (GPHIN) had leveraged NLP and machine learning to detect an outbreak of SARS 3 months before the WHO. I blogged about this, Global Incident Map, European Media Monitor (EMM), Havaria, HealthMap and Crimson Hexagon back in 2008. Most recently, my colleague Kalev Leetaru showed how applying NLP to historical data could have predicted the Arab Spring. Each of these initiatives represents an important effort in leveraging NLP and machine learning for early detection of events of interest.

The RecordedFuture system works as follows. A user first selects a set of data sources (websites, RSS feeds, etc.) and determines the rate at which to update the data. Next, the user chooses one or several existing “extractors” to find specific entities and events (or constructs a new type). A taxonomy is then selected to specify exactly how the data is to be grouped. The data is automatically harvested and passed through a linguistic analyzer which extracts useful information such as event types, names, dates, and places. Finally, the reports are clustered and visualized on a crisis map, in this case using an Ushahidi platform. This allows for all kinds of other datasets to be imported, compared and analyzed, such as high resolution satellite imagery and crowdsourced data.

A key feature of the RecordedFuture system is that it extracts and estimates the time of the event described rather than, for example, the publication time of the newspaper article parsed. As such, the harvested data can include both historic and future events.
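To make the distinction between event time and publication time concrete, here is a minimal sketch in Python. It is not how RecordedFuture does it (the study does not spell out the extractor internals); the function name `estimate_event_date` and the handful of patterns it recognizes are my own assumptions, chosen only to illustrate the idea that a report published today can describe something that happened last week or is planned for next week.

```python
import re
from datetime import date, timedelta

# Hypothetical relative-time words; a real linguistic analyzer covers far more.
_OFFSETS = {"yesterday": -1, "today": 0, "tomorrow": 1}


def estimate_event_date(text: str, published: date) -> date:
    """Estimate when the described event occurs, not when it was reported.

    Falls back to the publication date when no time expression is found.
    """
    lowered = text.lower()

    # 1. An explicit ISO date in the text wins (e.g. "2011-02-01").
    iso = re.search(r"\b(\d{4})-(\d{2})-(\d{2})\b", lowered)
    if iso:
        year, month, day = (int(part) for part in iso.groups())
        return date(year, month, day)

    # 2. Relative expressions pointing to the past ("3 days ago").
    ago = re.search(r"\b(\d+) days? ago\b", lowered)
    if ago:
        return published - timedelta(days=int(ago.group(1)))

    # 3. Relative expressions pointing to the future ("in 5 days").
    ahead = re.search(r"\bin (\d+) days?\b", lowered)
    if ahead:
        return published + timedelta(days=int(ahead.group(1)))

    # 4. Single-word offsets such as "yesterday" or "tomorrow".
    for word, days in _OFFSETS.items():
        if re.search(rf"\b{word}\b", lowered):
            return published + timedelta(days=days)

    # 5. No temporal cue found: assume the event coincides with publication.
    return published
```

With a publication date of 25 January 2011, a sentence such as "Protesters plan to march tomorrow" would be placed on 26 January, which is how a feed of news articles can yield events that lie in the future.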

In sum, the RecordedFuture system is composed of the following five features as described in the study:

1. Harvesting: a process in which text documents are retrieved from various sources and stored in the database. The documents are stored long-term if permitted by terms of use and IPR legislation; otherwise they are only stored temporarily for the needed analysis.

2. Linguistic analysis: the process in which the retrieved texts are analyzed in order to extract entities, events, time and location, etc. In contrast to other components, the linguistic analysis is language dependent.

3. Refinement: additional information can be obtained in this process by synonym detection, ontology analysis, and sentiment analysis.

4. Data analysis: application of statistical and AI-based models such as Hidden Markov Models (HMMs) and Artificial Neural Networks (ANNs) to generate predictions about the future and detect anomalies in the data.

5. User experience: a web interface for ordinary users to interact with, and an API for interfacing to other systems.

The authors ran a pilot that “manually” integrated the RecordedFuture system with the Ushahidi platform. The result is depicted in the figure below. In the future, the authors plan to automate the creation of reports on the Ushahidi platform via the RecordedFuture system. Intriguingly, the authors chose to focus on protest events to demo their Ushahidi-coupled system. Why is this intriguing? Because my dissertation analyzed whether access to new information and communication technologies (ICTs) is a statistically significant predictor of protest events in repressive states. Moreover, the protest data I used in my econometric analysis came from an automated NLP algorithm that parsed Reuters Newswires.

Using RecordedFuture, the authors extracted some 6,000 protest events for Quarter 1 of 2011. These events were identified and harvested using a “trained protest extractor” constructed using the system’s event extractor framework. Note that many of the 6,000 events are duplicates: the same event reported by different sources. Not surprisingly, Christopher and team plan to develop a duplicate detection algorithm that will also double as a triangulation and veracity scoring feature. I would be particularly interested to see them do this kind of triangulation and validation of crowdsourced data on the fly.
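The planned duplicate detection algorithm is not described in the study, so the following is only a rough sketch of how de-duplication could double as triangulation. It assumes each extracted report carries a source, a location, an event date and an event type (the `Report` class and both function names are hypothetical), groups reports that agree on the last three fields, and treats the number of distinct sources in each group as a crude corroboration score.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Report:
    """One extracted event report (hypothetical structure)."""
    source: str
    location: str
    event_date: date
    event_type: str


def cluster_reports(reports):
    """Group reports describing the same event.

    Two reports are treated as duplicates when they share a normalized
    location, an event date and an event type. This exact-match rule is an
    assumption; a real system would need fuzzier matching.
    """
    clusters = defaultdict(set)
    for report in reports:
        key = (
            report.location.strip().lower(),
            report.event_date,
            report.event_type.strip().lower(),
        )
        clusters[key].add(report.source)
    return dict(clusters)


def corroboration_scores(reports):
    """Map each distinct event to the number of independent sources reporting it."""
    return {key: len(sources) for key, sources in cluster_reports(reports).items()}
```

An event reported by several independent outlets would score higher than one mentioned by a single source, which is the intuition behind using duplicates for veracity scoring rather than simply discarding them.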

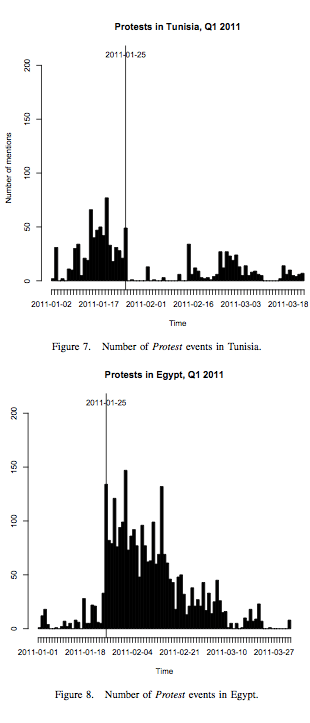

Below are the protest events picked up by RecordedFuture for both Tunisia and Egypt. From these two figures, it is possible to see how the Tunisian protests preceded those in Egypt.

The authors argue that if the platform had been set up earlier this year, a user would have seen the sudden rise in the number of protests in Egypt. However, the authors acknowledge that their data is a function of media interest and attention—the same issue I had with my dissertation. One way to overcome this challenge might be by complementing the harvested reports with crowdsourced data from social media and Crowdmap.

In the future, the authors plan to have the system auto-detect major changes in trends and to add support for the analysis of media in languages beyond English. They also plan to test the reliability and accuracy of their conflict early warning algorithm by comparing their forecasts of historical data with existing conflict data sets. I have several ideas of my own about next steps and look forward to speaking with Christopher’s team about ways to collaborate.
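As a thought experiment on what auto-detecting a major change in trend might look like, here is a small Python sketch. The study mentions Hidden Markov Models and neural networks for its data analysis component; what follows is a much simpler stand-in of my own, a rolling z-score over daily protest counts, and the function name, window size, threshold and `min_sigma` floor are all assumed values rather than anything taken from the paper.

```python
from statistics import mean, stdev


def flag_surges(daily_counts, window=7, threshold=3.0, min_sigma=1.0):
    """Return indices of days whose count far exceeds the recent baseline.

    For each day, the previous `window` days form the baseline. The day is
    flagged when its count lies more than `threshold` standard deviations
    above the baseline mean. `min_sigma` floors the standard deviation so a
    perfectly flat baseline does not cause division by zero or flag tiny
    increases.
    """
    flagged = []
    for i in range(window, len(daily_counts)):
        baseline = daily_counts[i - window:i]
        mu = mean(baseline)
        sigma = max(stdev(baseline), min_sigma)
        z_score = (daily_counts[i] - mu) / sigma
        if z_score > threshold:
            flagged.append(i)
    return flagged
```

Given a week of two to four reported protests per day followed by a day with 25, only the day with 25 would be flagged. Note that such a detector inherits the media-attention bias discussed above: it flags surges in reporting, which may or may not reflect surges on the ground.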

I wonder if you should also webmine agricultural and financial texts to catch early when harvests are failing and food prices rising, which I believe were catalysts for many of the protests.

If we could predict when stocks move, we could make some money : )

Patrick, I am working on a similar project. Even better, I’m in the DC area. If you have time for a Skype chat some time, I would like to see if I could build on some of this research. My particular interest is the nexus between secure communications, synchronizing events (“black swans”), and relative deprivation. I’m in the prelim research phase for a thesis and frequently come back to works you reference. I would appreciate any time you could spare.

Jeremy

@5nChange

Thanks Jeremy, would be great to chat. My email: patrick@iRevolution.net

Patrick, great blog post and thanks for the link to the paper. This looks like a pretty exciting approach for developing early warning mechanisms for predefined profiles. If you have interest in speaking on this at the next Tech@State event (Feb 3-4) on real-time awareness, let us know.

Thanks very much, Noel. Happy to talk on real-time awareness, should be in town then.
