It is time for a new chapter of WeRobotics to begin. This next stretch of the journey must include more diverse executive leadership. I’m thus stepping down as Executive Director of WeRobotics.* I feel deeply about this personal and professional decision, and didn’t come to it lightly or quickly. Thankfully, the WeRobotics Board has given me their full backing. With this next step, we can continue to walk the talk on diversity, equity, inclusion, localization, and shifting the power. Equally important, this new chapter presents all Flying Labs with a positive opportunity to shape the governance of WeRobotics itself.

Flying Labs are independent, locally-led knowledge hubs that combine local leadership and expertise with emerging technologies to drive positive and sustainable social impact. They’re co-created with WeRobotics but hosted and run by locally-owned organizations, companies, and/or social enterprises. WeRobotics serves as the primary enabler of the Flying Labs Network.

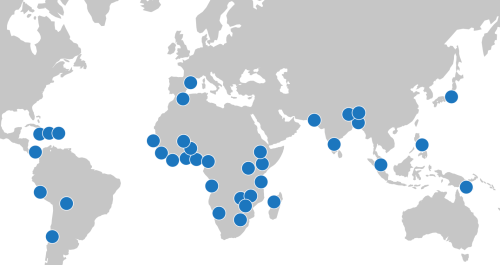

I want to express my deepest gratitude to all of you who worked with us to expand the power of locally-led action over the past 7+ years. Together, we’ve significantly expanded the quality and quantity of locally-led opportunities across multiple sectors. We also built greater respect and more robust demand for local leadership, ownership, knowledge, and expertise. How? By co-creating and co-implementing a radical decentralization and localization model with a wide range of Flying Labs in nearly 40 countries. The collective impact of this model speaks for itself.

We’ve accomplished a lot together. I can’t list every single example here, so I’ll just share a few key accomplishments that mean a lot to me given my values, interests, and direct contributions. While I was largely responsible for catalyzing, championing, and/or coordinating the efforts below, it took our outstanding and purpose-driven teammates at WeRobotics and across the Flying Labs Network to refine these efforts, improve and extend them, and translate them into direct, meaningful impact. We also relied on strong external partners, donors, dedicated Board Members, and phenomenal interns. This was a true team effort in every sense of the word. As we all know, the myth of the lone leader is pure fantasy.

In 2015, one of my WeRobotics Co-Founders, Dr. Andrew Schroeder, and I launched the first-ever program dedicated to the locally-led use of drones for disaster management (AidRobotics). Together with many Flying Labs, we built the World Food Programme’s (WFP) own institutional expertise in this space over multiple years. This included WeRobotics and Flying Labs leading half a dozen hands-on professional trainings for WFP country teams in Africa, Asia, Latin America, and the Caribbean, as well as trainings with other UN agencies, from Malawi to the Maldives. Since then, Flying Labs have led their own trainings and operational deployments in response to a wide range of disasters across the globe. What’s more, we were amongst the first to apply machine learning and AI to automate the analysis of drone imagery (building on earlier work done at QCRI). I also launched a professional, peer-reviewed online training on the use of drones in humanitarian action, the only course of its kind.

AidRobotics was our foundational and single most active program during the first critical years of WeRobotics. In fact, this program played an instrumental role in defining WeRobotics’ values, model and mission. So it’s worth expanding on this. AidRobotics was strongly influenced by UAViators, a global professional network and community of practice I founded in 2013 with an explicit focus on localization, ethics, and best practices. In fact, the initial decentralization idea of Flying Labs actually originated from UAViators. This also explains why Nepal Flying Labs (the first Flying Labs) predates WeRobotics by well over a year, and why the first Flying Labs projects were implemented in partnership with UAViators. Our joint learnings in Nepal later informed the launch of this digital solution to coordinate drone flights in disasters.

In fact, the entire AidRobotics Program (including the Code of Conduct, training expertise, and our deployment experience) was a direct spinoff from the open collaborations at the heart of the UAViators community. Thanks to this early operational and policy engagement, we brought to WeRobotics our core values and a strong commitment to localization, decentralization, and locally-led action. See the section “From UAViators to WeRobotics” in this peer-reviewed publication.

The foundational work through UAViators served to catalyze the co-creation of the Flying Labs Network, which has successfully expanded the space for locally-led action in the use of emerging technologies for social impact. So the Flying Labs Network feels like the pinnacle of a long journey from when I first began working on localization and people-centered projects in 2006, within the context of early warning and response systems in humanitarian emergencies. On the tech side, I’ve been working in humanitarian technology since co-founding and co-directing the Harvard Humanitarian Initiative’s (HHI) Program on Crisis Mapping in 2007. The first time I wrote about the use of drones in humanitarian action was in 2008.

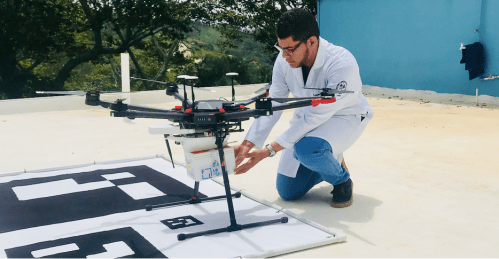

In 2016, we teamed up with Peru Flying Labs to launch the first-ever program dedicated to the locally-led use of drones for medical delivery (HealthRobotics). Peru Flying Labs initiated this program through an explicit request to explore the possibilities of medical drone delivery in the Amazon Rainforest. To date, WeRobotics and several different Flying Labs have carried out more locally-driven drone delivery trainings and projects in more countries than any other organization or company thanks to our strategic partnerships with WHO, the CDC, Gates Foundation, Pfizer, Johnson & Johnson, and BD, along with multiple Ministries of Health, hospitals, clinics, doctors, nurses, and patients in Africa, Asia, Latin America, the Caribbean, and the South Pacific. Furthermore, we made drone delivery far more accessible than any other organization.

What’s more, thanks to the leadership of Flying Labs, we were the first to enable locally-led cargo drone deliveries in Peru, Dominican Republic, Nepal, Papua New Guinea, Uganda, and the Philippines, among others. We also enabled large-scale locally-led deliveries in Madagascar. To share our learnings, I launched a professional, peer-reviewed online training on using cargo drones in health. This is still the only ongoing course of its kind. Like the AidRobotics course, it was peer-reviewed by MIT, UPenn and Direct Relief experts.

In 2017, we collectively launched and grew our dedicated engineering team to make cargo drones far more accessible to Flying Labs, and to offer Flying Labs in-house add-on technology for using drones in a broader range of social good applications. The purpose of doing so was to enable Flying Labs to become first-movers in their own countries, as opposed to foreign companies and consultants who often parachute in with little local knowledge or interest in local ownership.

Our engineering expertise enabled both WeRobotics and Flying Labs to explore novel drone applications, including the precision release of beneficial mosquitoes to eliminate dengue and Zika; ladybugs to protect pecan trees; and mangrove seeds for climate change mitigation. The level of expertise needed to design and build these autonomous and drone-optimized release systems was considerable. Some members of the WeRobotics engineering team have since created a spinoff (formerly called Release Labs) to pursue related opportunities in the social impact space. I’m proud to have played a long and instrumental role in incubating this climate tech startup.

In 2018, we fully democratized the Flying Labs Network, enabling qualified local organizations worldwide to join the Network. We co-created a localization model with all the required guidelines and governance mechanisms to respond to the priorities and interests of local organizations. This development was important to me because of my strong interest in locally-led action and decentralization prior to WeRobotics. Fellow Co-Founder Andrew hasn’t received enough public credit for helping to shape this democratization and decentralization model, which paved the way for the Flying Labs Network to become a social movement dedicated to The Power of Local. This model ultimately enabled the Network to grow from three Flying Labs in 2018 to nearly 40 in 2023 (despite the devastating multi-year pandemic in between). You can read more about the model and its applications to other sectors here. Another proud accomplishment of 2018 was the launch of our Online Training Academy!

In 2019, we launched a new dedicated program to engage youth directly (YouthRobotics). WeRobotics and Flying Labs were the first to carry out hands-on youth trainings and projects in dozens of countries. These locally-led projects included aerial, terrestrial, and marine robotics. I initially took the lead in this program and secured our first funding for STEM projects. Together with multiple colleagues, we subsequently had the opportunity to co-implement these first activities in the South Pacific. This opened the door for many STEM projects that followed. As part of the YouthRobotics Program, we also teamed up with Flying Labs to co-create the first-ever picture book for children that is explicitly geared towards the importance of local knowledge, leadership, and ownership when it comes to the use of emerging technologies for social good projects. There are plans to turn this into a book series with Flying Labs.

It’s worth noting that the three most active and impactful operational programs at WeRobotics over the past 7+ years have been the AidRobotics, HealthRobotics, and YouthRobotics Programs. This is all thanks to the dedicated WeRobotics and Flying Labs Teams who took these programs to the next level. There are many more accomplishments to write about within each of these three programs, so perhaps another book is in order!

In 2020, with the COVID outbreak, I led the launch of this dedicated campaign to directly inform the appropriate use of drone technology in response to the pandemic. That same year, following our public commitment to anti-racism, I catalyzed our efforts to diversify our Board, shift our communications strategy and make the WeRobotics Team more inclusive. I’m very proud that we successfully accomplished each of the goals in our public commitment thanks to a huge team effort. I later led the launch of this shift-the-power series to document our concrete steps in shifting power with local organizations. All these efforts were central to our organizational transformation. In addition, we launched the Flying Council with Flying Labs to accelerate our Stopping-as-Success explorations. I’m a western white male who works hard to understand and reflect on my privileged role and how to transform individually. This position of power can have an impact on organizations, including WeRobotics and Flying Labs. I recognize that shifting the power is a continuous and hard-fought journey, and still have a lot to learn.

In 2021, we teamed up with multiple Flying Labs to fully document our joint localization and shift-the-power model, which we first began co-creating with Flying Labs in 2018. Why? Because the model was simply not getting enough visibility in policy circles, or influencing mainstream discussions on localization. We also wanted to make the model more accessible for others to adapt and adopt. So I took the lead from the WeRobotics side by working closely with many Flying Labs. As always, their insights were considerable and their input invaluable. The applied research and writing took over five months. Once completed, we launched this detailed report on our decentralization model at the Skoll World Forum to demonstrate and explain the model’s success.

The co-creation of this model will undoubtedly remain one of my proudest accomplishments at WeRobotics. We also used the high-profile Skoll event to formally launch the Power Footprint Project, which I’m also very passionate about. And we fully updated our Shift-the-Power strategy, along with the impact pages of WeRobotics and Flying Labs.

In 2022, following another successful independent audit, we publicly confirmed that in 2021, WeRobotics transferred 42% of its own revenue and funding to local organizations. The industry average in the humanitarian and development space is typically 2-3%. This makes us one of the few international nonprofit organizations worldwide to accomplish such high levels of equity. We did this by walking the talk; by using our co-created localization model that clearly places local organizations first, along with local leadership, ownership, and expertise. In 2022, we also launched this dedicated call for the Power Footprint Project. The Board is exploring how best to move this project forward.

During the second half of 2022, I worked closely with colleagues to initiate necessary organizational improvements in terms of Board oversight, governance, decision-making, executive performance reviews, accountability mechanisms, and more. I proactively reached out to the Board on this, working directly with them — and with the Head of Human Resources and Head of Finance — to ensure that WeRobotics stands on solid institutional foundations for the future. This essential work took up 120% of my own time between June and October 2022; groundwork that should enable WeRobotics to be more in line with institutional best practices in 2023. These organizational improvements are among the most important contributions I’ve made at WeRobotics. Leading a transformation agenda can be complex and result in burnout.

On the funding front, we successfully secured support from innovative partners who strongly believed in our mission throughout the years. This includes — but is certainly not limited to — The Rockefeller Foundation, Hewlett Foundation, Gates Foundation, Autodesk Foundation, Jansen Foundation, Atlassian Foundation, Fondation Botnar, Omidyar Network, Twilio Foundation, PagerDuty, MIT Solve, multiple United Nations Agencies, World Bank, Inter-American Development Bank (IADB), USAID, Australia’s Department of Foreign Affairs and Trade (DFAT), BD, Pfizer, Johnson & Johnson, and more.

There’s definitely a lot more that I’m proud of, such as our 100% success rate in passing all of our rigorous and independent audits; the many technology partnerships we’ve secured; leading our expansion into both marine robotics and terrestrial robotics; the Social Ripples systems change project; and our new and improved impact monitoring framework. Not to mention many other essential accomplishments that I wasn’t involved in, such as locally-led drone certification courses; WeShare, our knowledge sharing platform built with Flying Labs; the Labs’ Global Model; the launch of Labs Use-Cases; and many more projects featured on the WeRobotics and Flying Labs blogs, and in our Annual Reports.

To conclude, the most crucial point to take away is this: the enormous team efforts across both WeRobotics and the Flying Labs Network made all the above accomplishments possible and successful.

I’m excited about the next chapter of WeRobotics and Flying Labs. The Flying Labs Network is expected to grow to well over 40 Flying Labs in 2023. There simply is no other network quite like this one. Flying Labs are already training each other and implementing joint projects with each other. This trend will increase substantially, resulting in even more network effects. As I have reminded all my Flying Labs colleagues during our retreats over the years: “You are each other’s single best resource!”

Whoever becomes the next Executive Director of WeRobotics matters a lot to Flying Labs. So the WeRobotics Board will reach out to all Labs to invite their nominations for strong leadership candidates who are fully committed to our core values. This new chapter is a big positive opportunity for Flying Labs to shape the governance of WeRobotics itself. While change is never easy, the benefits are clear. The significant value-add of greater diversity in team leadership is very well proven. More diverse leadership at WeRobotics will also enable Flying Labs to gain greater access to new funding opportunities.

And don’t forget that WeRobotics has a strong Alumni Network! For example, Joseph (former Head of Drone Data and Systems), Jürg (former Head of Engineering), Seb (former Lead Engineer), and Cameron (former Lead Engineer), amongst others, all joined the Alumni Network in the past 10 months. What’s more, the Head of HR is joining the network in the coming months, as is the Head of Finance. So WeRobotics has top-notch alumni to draw on. In fact, several alumni have already supported multiple colleagues at Flying Labs and WeRobotics. I pledge to do the same.

When the time is right, I’ll publish a blog post to share the most important professional and personal insights I’ve gained while at WeRobotics, along with the most important lessons learned as executive director during the past 7+ years. This will include my first-hand experience and lessons learned working with a Board. I hope that sharing my learnings will be of value to others. It is essential to me that we live up to our core values externally and internally.

It was an incredible honor and privilege to serve as the official director of this organization.* What I’ll miss the most are my dear colleagues at WeRobotics and Flying Labs: their compassion, kindness, brilliance, dedication, and humor. We laughed a lot during our recent Flying Labs Retreat in Nairobi, and we cried (happy tears of gratitude), shared meals, sang, listened to powerful poetry, and even danced. It was good for the soul, as were the many in-person hugs and the energy, inspiration, determination, and brilliance that Flying Labs colleagues brought to the many discussions. I’ll miss this Flying Labs magic, the Power of Local. So I look forward to following their good work.

In sum, I am deeply grateful to everyone who made the above contributions possible and more impactful. You all know who you are. You were there, time and time again, to expand the space for locally-led action. I’ll be forever grateful to you. Lastly, and equally importantly, I want to explicitly recognize and thank each of my colleagues for their proudest accomplishments at WeRobotics and across the Flying Labs Network. Keep shining!

Per Aspera ad Astra.

Through adversity to the stars.

* The WeRobotics Board of Directors did not approve the Co-CEO titles, which is why I’m using the approved title of ED.