Sigh indeed. Yawn, even.

The purpose of this series is not to make it about Paul and Patrick. That’s boring as heck. The idea behind the series was not simply to provoke and use humorous analogies but to dispel confusion about crowdsourcing and thereby provide a more informed understanding of this methodology. I fear this is getting completely lost.

Recall that it was a humanitarian colleague who came up with the label “Crowd Sorcerer”. It made me laugh so I figured we’d have a little fun by using the label Muggle in return. But that’s all it is, good fun. And of course many humanitarians see eye to eye with the Crowd Sorcerer approach, so apologies to those who felt they were wrongly placed in the Muggle category. We’ll use the Sorting Hat next time.

This is not about a division between Crowd Sorcerers and Muggles. As a colleague recently noted, “the line lies somewhere else, between effective implementation of new tools and methodologies versus traditional ways of collecting crisis information.” There are plenty of humanitarians who see value in trying out new approaches. Of course, there are some who simply say “No We Can’t, No We Won’t.”

There’s no point going back and forth with Paul on every one of his issues because many of these actually have little to do with crowdsourcing and more to do with him being provoked. In this post, I’m going to stick to the debate about the ins and outs of crowdsourcing in humanitarian response.

On Verification

Muggle Master: And of course the way in which Patrick interprets those words bears little relation to what those words actually said, which is this: “Unless there are field personnel providing “ground truth” data, consumers will never have reliable information upon which to build decision support products.”

I disagree. Again, the traditional mindset here is that unless you have field personnel (your own people) in charge, then there is no way to get accurate information. This implies that the disaster affected populations are all liars, which is clearly untrue.

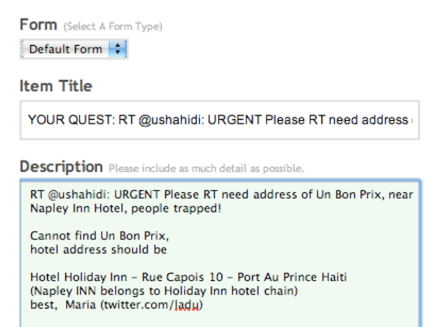

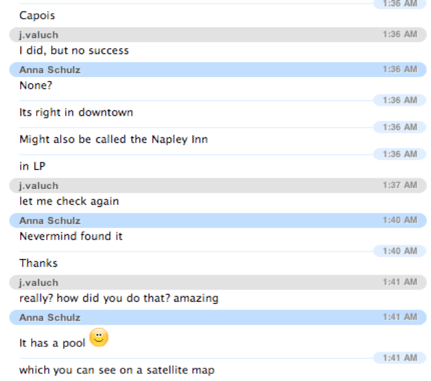

Verification is of course important; no one said the contrary. Why would Ushahidi be dedicating time and resources to the Swift platform if the group didn’t think that verification was important?

The reality here is that verification is not always possible regardless of which methodology is employed. So it boils down to this: is having information that is not immediately verified better than having no information at all? If your answer is yes or “it depends”, then you’re probably a Crowd Sorcerer. If your answer is, “let’s try to test some innovative ways to make rapid verification possible,” then again, you likely are a Crowd Sorcerer/ette.

Incidentally, no one I know has advocated for the use of crowdsourced data at the expense of any other information. Crowd Sorcerers (and many humanitarians) are simply suggesting that it be considered one of multiple feeds. Also, as I’ve argued before, a combined approach of bounded and unbounded crowdsourcing is the way to go.

On Impact Evaluation

The Fletcher Team has commissioned an independent evaluation of the Ushahidi deployment in Haiti to go beyond the informal testimonies of success provided by first responders. This is a four-week evaluation led by Dr. Nancy Mock, a seasoned humanitarian and M&E expert with over 30 years of experience in the humanitarian and development field.

Nathan Morrow will be working directly with Nancy. Nathan is a geographer who has worked extensively on humanitarian and development information systems. He is a member of the European Evaluation Society and like Nancy a member of the American Evaluation Association. Nathan and Nancy will be aided by a public health student who has several years of experience in community development in Haiti and is a fluent Haitian Creole speaker.

The evaluation team has already gone through much of the data and been in touch with many of the first responders as well as other partners. Their job is to do as rigorous an evaluation as possible and do this fully transparently. Nancy plans to present her findings publicly at the 2010 Crisis Mappers Conference where we’ve dedicated a roundtable to reviewing these findings, as well as other reviews.

As for background, the ToR (available here) was drafted by graduate students specializing in M&E and reviewed closely by Professor Cheyanne Church, who teaches advanced graduate courses on M&E. She is considered a leading expert on the subject. The ToR was then shared on a number of listservs including ReliefWeb, the CrisisMappers Group and Pelican (a listserv for professional evaluators).

Nancy and Nathan are both experienced in the method known as utilization-focused evaluation (UFE), an approach chosen by The Fletcher Team to ensure that the evaluation is useful to all primary users as well as the humanitarian field. The UFE approach means that the ToR is a living document and being adapted as necessary by the evaluators to ensure that the information gathered is useful and actionable, not just interesting.

We don’t have anything to hide here, Muggles. This was a complete first in terms of live crisis mapping and mobile crowdsourcing. Unlike the humanitarian community, we were neither prepared nor trained. We had no prior experience with live crisis mapping and mobile crowdsourcing, with the use of crowdsourcing for near real-time translation, or with managing hundreds of unpaid volunteers. The vast majority of those volunteers had no background in disaster response, and most could not focus on this full time because of their under/graduate coursework and mid-term exams. They had no direct links or contacts with first responders prior to the deployment, and the many responders did not know they existed or who they were. In sum, they had all the odds stacked against them.

If the evaluation shows that the deployment and the Fletcher Team’s efforts didn’t save lives or rescue people, are unlikely to have done so, or had no impact, none of us will dispute this. Will we give up? Of course not. Crowd Sorcerers don’t give up. We’ll learn and do better next time.

One of the main reasons for having this evaluation is not only to assess the impact of the deployment but to create a concrete list of lessons learned so that what didn’t work then is more likely to work in the future. The point here is to assess the impact just as much as it is to assess the potential added value of the approach for future deployments.

How can anyone innovate in a space riddled with a “No We Can’t, No We Won’t” mindset? Trial and error is not allowed; iterative learning and adaptation are as illegal as the dark arts. Some Muggles really need to read the post “On Technology and Learning, or Why the Wright Brothers Did Not Create the 747.” If die-hard Muggles had had their way, they would have forced the brothers to close up shop after just their first attempt because it “failed.”

Incidentally, the majority of development, humanitarian, aid, etc., projects are never evaluated in any rigorous or meaningful way (if at all, even). But that’s ok because these are double (Muggle) standards.

On Communicating with Local Communities

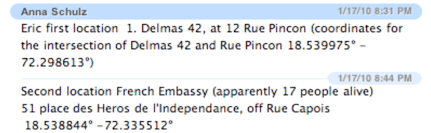

Concerns over security need not always be used as an excuse for not communicating with local communities. We need to find a way not to exclude potentially important informants. A little innovation and creative thinking wouldn’t hurt. Humanitarians working with Crowd Sorcerers could use SMS to crowdsource reports, triangulate as best as possible using manual means combined with Swift River, cross-reference with official information feeds and investigate reports that appear the most clustered and critical.

That way, if you see a significant number of text messages reporting the lack of water in an area of Port-au-Prince, then at least this gives you an indication that something more serious may be happening in that location and you can cross-reference your other sources to check whether the issue has already been picked up. Again, it’s this clustering effect that can provide important insights on a given situation.
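For the technically curious, here is a rough sketch of what that clustering effect could look like in practice. It is only an illustration under my own assumptions: the reports are invented, they are assumed to be already geocoded and tagged by topic, and the `find_clusters` helper and its `min_reports` threshold are hypothetical. This is not how Swift River or Ushahidi actually work.

```python
from collections import Counter

# Invented example reports, assumed already geocoded and tagged: (area, topic).
# None of this is real data from the Haiti deployment.
reports = [
    ("Delmas", "water"),
    ("Delmas", "water"),
    ("Carrefour", "shelter"),
    ("Delmas", "water"),
    ("Petionville", "water"),
    ("Delmas", "medical"),
    ("Petionville", "water"),
    ("Delmas", "water"),
]


def find_clusters(reports, min_reports=3):
    """Return ((area, topic), count) pairs seen at least min_reports times.

    The result is ordered from most-reported to least-reported, so the
    top entries are the ones to cross-reference with other sources first.
    """
    counts = Counter(reports)
    return [(key, n) for key, n in counts.most_common() if n >= min_reports]


if __name__ == "__main__":
    for (area, topic), n in find_clusters(reports):
        print(f"{n} reports about {topic} in {area}: worth cross-checking")
```

A cluster here is not a verified fact. It is a prompt to go check your other feeds, which is the whole point of treating crowdsourced reports as one of multiple sources.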

This would provide a mechanism to allow Haitians to report problems (or complaints for that matter) via SMS, phone, etc. Imogen Wall and other experienced humanitarians have long called for this to change. Hence the newly founded group Communicating with Disaster Affected Communities (CDAC).

Confusion to the End

Me: Despite what some Muggles may think, crowdsourcing is not actually magic. It’s just a methodology like any other, with advantages and disadvantages.

Muggle Master: That’s exactly what “Muggles” think.

Haha, well if that’s exactly what Muggles think, then this is yet more evidence of confusion in the land of Muggles. Crowdsourcing is just a methodology to collect information. There’s nothing new about non-probability sampling. Understanding the advantages and disadvantages of this methodology doesn’t require an advanced degree in statistical physics.

Muggle Master: Crowdsourcing should not form part of our disaster response plans because there are no guarantees that a crowd is going to show up. Crowdsourcing is no different from any other form of volunteer effort, and the reason why we have professional aid workers now is because, while volunteers are important, you can’t afford to make them the backbone of the operation. The technology is there and the support is welcome, but this is not the future of aid work.

This just reinforces what I’ve already observed: many in the humanitarian space are still confused about crowdsourcing. The crowd is always there. Haitians were always there. And crowdsourcing is not about volunteering. Again, crowdsourcing is just a methodology to collect information. When the UN does its rapid needs assessment, does the crowd all of a sudden vanish into thin air? Of course not.

As for volunteers, the folks at Fletcher and SIPA are joining forces to work together on deploying live crisis mapping projects in the future. They’re setting up their own protocols, operating procedures, etc., based on what they’ve learned over the past 6 months in order to replicate the “surge mapping capacity” they demonstrated in response to Haiti and Chile. (Swift River will make a large number of volunteers unnecessary.)

And pray tell who in the world has ever said that volunteers should be the backbone of a humanitarian operation? Please, do tell. That would be a nice magic trick.

Muggle Master: “The technology is there and the support is welcome, but this is not the future of aid work.”

The support is welcome? Great! But who said that crowdsourcing was the future of aid work? It’s just a methodology. How can one sole methodology be the future of aid work?

I’ll close with this observation. The email thread that started this Crowd-Sorcerer series ended with a second email written by the same group that wrote the first. That second email was far more constructive and conducive to building bridges. I’m excited by the prospects expressed in this second email and really appreciate the positive tone and interest they expressed in working together. I definitely look forward to working with them and learning more from them as we move forward in this space and in this collaboration.