I’ve recently been cc’d on an email thread in which a humanitarian group has started to “air out some latent issues and frustrations” vis-a-vis the use of crowdsourcing in emergencies. I applaud them for speaking up and credit them with coining the fantastic term “crowd-sorcerers,” which is brilliant! The group is apparently preparing to publish a report concerning Humanitarian Information Management in Haiti. I really hope to appear in their chapter on “The Crowd-Sorcerers.”

I too prefer candid conversations over diplomatic pillow talk. Let’s be honest, it’s not actually difficult to disrupt the humanitarian system. It’s hierarchical, overly bureaucratic, slow, often unaccountable and at times spectacularly corrupt. But I want to make sure my tone here is not misunderstood. I want to be constructive but playful and provocative at the same time, to “lighten things up” a bit. We take ourselves way too seriously, too often. That’s why I absolutely love the term Crowd-Sorcerer! Let’s use Muggles for our humanitarian friends.

I’ll first lay out some of the frustrations aired by the Muggles in their own words so I don’t misrepresent their concerns—some of which are obviously valid (but not necessarily new). I’ll be reviewing these concerns in a series of blog posts, so stay tuned for future episodes in the new Crowd-Sorcerer Series! Caution: in case it’s not yet obvious, I will be deliberately provocative and playful in this series.

Muggles: Unless there are field personnel providing “ground truth” data, consumers will never have reliable information upon which to build decision support products. Crowdsourcing may be a quick way to get a message out, but it is not good information unless there is on-the-ground verification going on.

Not sure how you’d interpret these words but what they say to me is this: unless information comes from official field personnel, i.e., Muggles, it’s absolutely useless and should be dumped in the trash. I personally find that somewhat… is colonial too provocative?

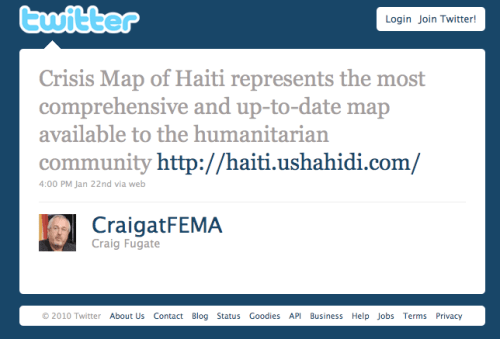

Crisis information that was crowdsourced using the distributed short code 4636 in Haiti helped save hundreds of lives according to the Marine Corps. The vast majority of this information could not be verified and yet both the Marine Corps and Coast Guard used this as one of their feeds while FEMA encouraged the crowd-sorcerers to continue mapping, calling the crisis map of Haiti the most comprehensive and up-to-date source of information available to Muggles.

There’s another extraordinary story here, and that’s the story of Mission 4636. Tens of thousands of incoming text messages from disaster-affected communities in Haiti were translated from Haitian Kreyol to English in near real-time thanks to crowdsourcing. These text messages were translated by thousands of Kreyol-speaking volunteers from all around the world.

Without this crowdsourcing, the Marine Corps, Coast Guard, FEMA and others could not have used the information streaming in from 4636 as effectively as they did. And guess what? The original platform that was used to do this translation-by-crowdsourcing was built overnight by Brian Herbert, a 20-something tech developer at Ushahidi.

Where were the Muggles then? I’m sorry to put it in these terms but if we had listened to (and waited for) Muggles all the time, then perhaps several hundred more people would have needlessly lost their lives in Haiti.

A forthcoming USIP report that reviews the deployment of the Ushahidi platform found that Haitian NGOs and local civil society groups were physically barred from entering LogBase, the humanitarian community’s compound near the airport in Port-au-Prince. One Haitian NGO rep who was interviewed said he felt like a foreigner in his own country when he wasn’t allowed to enter LogBase and attend meetings where he could share vital information on urgent needs.

Now tell me, how is trashing Haitian text messages any different from physically excluding Haitians from having a voice at LogBase? Because the so-called “unwashed masses” don’t have the “right” credentials as defined by the Muggles? Either way, they are excluded from having a stake in the hierarchical system that is supposed to help them.

Incidentally, a team of three accomplished experts in M&E (monitoring and evaluation) is currently carrying out a fully independent impact assessment of the Ushahidi deployment during the emergency period. They will be in Haiti for the field work and yes, one member of the team speaks fluent Kreyol. The PI from Tulane University has over 20 years of relevant experience. It would make absolutely no sense for Ushahidi to carry out this review.

Ushahidi has little to no expertise in M&E, and such a review would likely be viewed as biased if Ushahidi were authoring it. In fact, Ushahidi didn’t even commission the evaluation; the Fletcher Team did, and they should be applauded for doing so. By the way, as I have blogged here, it is misguided to assume that experts in, say, development are by definition experts at evaluating development projects. M&E is a separate area of expertise and a profession in its own right. Anyone who has taken M&E 101 will know this from the first lecture.

We’re going to a commercial break now, but stay tuned for the next episode: “Here Come the Crowd-Sorcerers: Is it Possible to Teach an Old (Humanitarian) Dog New Tech’s?”