The etymology of the word “theory” is particularly interesting. The word comes from ancient Greek: theoros means “spectator,” from thea “a view” + horan “to see.” In 1638, theory was used to describe “an explanation based on observation and reasoning.” How fitting that the etymology of “theory” resonates with the purpose of crisis mapping.

But is there a formal theory of crisis mapping per se? There are little bits and pieces here and there, sprinkled across various disciplines, peer-reviewed journals and conference presentations. But I have yet to come across a “unified theory” of crisis mapping. This may be because the theory (or theories) are implicit and self-evident. Even so, there may be value in rendering the implicit—why we do crisis mapping—more visible.

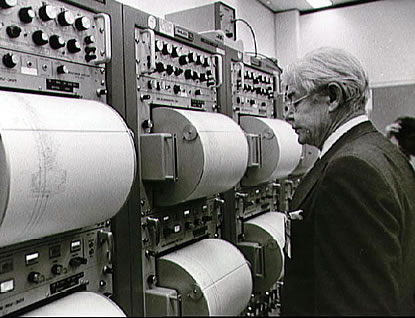

Crises occur in time and space. Yet our study of crises (and conflict in particular) has generally focused on identifying trends over time rather than over space. Why? Because unlike the field of disaster management, we do not have seismographs scattered around the planet that precisely pinpoint the source of escalating social tremors. This means that the bulk of our datasets describe conflict as an event happening in countries and years, not cities and days, let alone towns and hours.

This is starting to change thanks to several factors: political scientists are now painstakingly geo-referencing conflict data (example); natural language processing algorithms are increasingly able to extract time and place data from online media and user-generated content (example); and innovative crowdsourcing platforms are producing new geo-referenced conflict datasets (example).

In other words, we have access to more disaggregated data, which allows us to study conflict dynamics at a more appropriate scale. By the way, this stands in contrast to the “goal of the modern state [which] is to reduce the chaotic, disorderly, constantly changing social reality beneath it to something more closely resembling the administrative grid of its observations” (1). Instead of Seeing Like a State, crisis mapping corrects the myopic grid to give us The View from Below.

Crises are patterns; by this I mean that crises are not random. Military or militia tactics are not random either. There is a method to the madness, the fog of war notwithstanding. Peace is also a pattern. Crisis mapping gives us the opportunity to detect peace and conflict patterns at a finer temporal and spatial resolution than previously possible; a resolution that more closely reflects reality at the human scale.

Why do scientists build increasingly sophisticated microscopes? So they can get more micro-level data that might explain patterns at a macro-scale. (I wonder whether this means we’ll get to a point where we cannot reconcile quantum conflict mechanics with the general theory of conflict relativity.) But I digress.

Compare analog televisions with today’s high-definition digital televisions. The latter offer a closer reflection of reality. Or picture a crystal-clear lake on a fine spring day. You peer over the water and see the pattern of rocks on the bottom of the lake. You also see a perfect reflection of the leaves on the trees by the lake shore. If the wind picks up, however, or if rain begins to fall, the water drops cause ripples (“noise” in the data) that prevent you from seeing the same patterns as clearly. Crisis mapping calms the waters.

Source: http://3.bp.blogspot.com

Keeping with the lake analogy, the ripples form certain patterns. Conflict is also the result of ripples in the socio-political fabric. The question is how to dampen or absorb the ripples without causing unintended ripples elsewhere. What kinds of new patterns might we generate to “cancel out” conflict patterns and amplify peaceful patterns? Thinking about patterns and anti-patterns in time and space may be a useful way to describe a theory of crisis mapping.

Some patterns may be more visible or detectable at certain temporal-spatial scales or resolutions than at others. Crisis mapping allows us to vary this scale freely; to see the Nazca Lines of conflict from another perspective and at different altitudes. In short, crisis mapping allows us to escape the linear, two-dimensional world of Euclidean political science to see patterns that otherwise remain hidden.
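To make the point about scale a little more concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the event records are made up, and `bin_events`, `cell_deg` and `window_days` are hypothetical names, not part of any crisis mapping platform. It simply counts the same events on a coarse grid (roughly "country and year") and on a fine grid (roughly "town and week").

```python
from collections import Counter
from math import floor

# Hypothetical, made-up event records: (latitude, longitude, day index).
EVENTS = [
    (0.10, 32.50, 1), (0.12, 32.52, 2), (0.11, 32.51, 2),  # cluster A, early
    (2.80, 34.10, 40), (2.82, 34.12, 41),                  # cluster B, later
]

def bin_events(events, cell_deg, window_days):
    """Count events per (lat cell, lon cell, time window).

    cell_deg is the grid cell size in degrees and window_days is the
    length of each time window; both are illustrative parameters.
    """
    counts = Counter()
    for lat, lon, day in events:
        key = (floor(lat / cell_deg), floor(lon / cell_deg), day // window_days)
        counts[key] += 1
    return counts

# Coarse view: 10-degree cells, one-year windows.
coarse = bin_events(EVENTS, cell_deg=10.0, window_days=365)

# Fine view: half-degree cells, one-week windows.
fine = bin_events(EVENTS, cell_deg=0.5, window_days=7)

print(len(coarse), "cell(s) at the coarse scale")
print(len(fine), "cell(s) at the fine scale")
```

At the coarse scale, all five events collapse into a single cell, so the two clusters are indistinguishable. At the fine scale they separate into two cells that differ in both place and time. Real data would of course need proper projections and far more care than this toy grid.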

In theory, then, adding spatial data should improve the accuracy and explanatory power of conflict models. This should provide us with better and more rapid ways to detect the patterns behind conflict ripples before they become warring tsunamis. But we need more rigorous and data-driven studies that demonstrate this theory in practice.

This is one theory of crisis mapping. Problem is, I have many others! There’s more to crisis mapping than modeling. In theory, crisis mapping should also provide better decision support, for example. Also, crisis mapping should theoretically be more conducive to tactical early response, not to mention monitoring & evaluation. Why? I’ll ramble on about that some other day. In the meantime, I’d be grateful for feedback on the above.