The Standby Volunteer Task Force (SBTF) was launched exactly a year ago tomorrow, and what a ride it has been! It was on September 26, 2010, that I published the blog post below to begin rallying the first volunteers to the cause.

Just over 360 days later, no fewer than 621 volunteers have joined the SBTF. These amazing individuals are based in more than sixty countries, including Afghanistan, Algeria, Argentina, Armenia, Australia, Belgium, Brazil, Canada, Chile, Colombia, Czech Republic, Denmark, Egypt, Finland, France, Germany, Ghana, Greece, Guam, Guatemala, Haiti, Hungary, India, Indonesia, Iran, Ireland, Israel, Italy, Japan, Jordan, Kenya, Republic of Korea, Lebanon, Liberia, Libya, Mexico, Morocco, Nepal, Netherlands, New Zealand, Nigeria, Pakistan, Palestine, Peru, Philippines, Poland, Portugal, Senegal, Serbia, Singapore, Slovenia, Somalia, South Africa, Spain, Sudan, Switzerland, Tajikistan, Trinidad and Tobago, Tunisia, Turkey, Uganda, United Kingdom, United States and Venezuela.

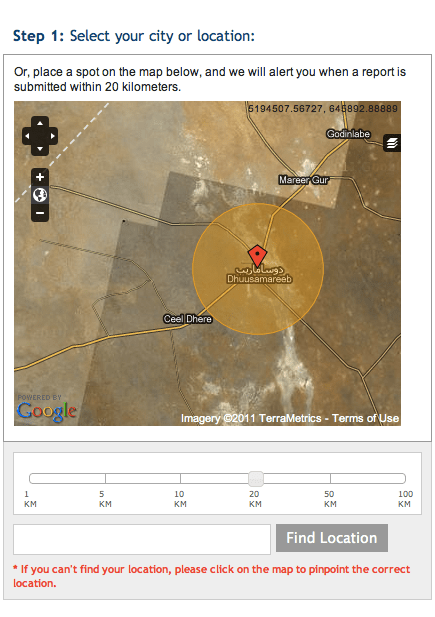

Most members have added themselves to the SBTF map below.

Between them, members of the SBTF represent several hundred organizations, including the American Red Cross, the American University in Cairo, the Australian National University, Bertelsmann Foundation, Briceland Volunteer Fire Department, Brussels School of International Studies, Carter Center, Columbia University, CrisisCommons, Deloitte Consulting, Engineers Without Borders, European Commission Joint Research Centre, Fairfax County International Search & Rescue Team, Fire Department of NYC, Fletcher School, GISCorps, Global Voices Online, Google, Government of Ontario, Grameen Development Services, Habitat for Humanity, Harvard Humanitarian Initiative, International Labour Organization, International Organization for Migration, John Carroll University, Johns Hopkins University, Lewis and Clark College, Lund University, Mercy Corps, Ministry of Agriculture and Forestry of New Zealand, Médecins Sans Frontières, NASA, National Emergency Management Association, National Institute for Urban Search and Rescue, NetHope, New York University, OCHA, Open Geospatial Consortium, OpenStreetMap, OSCE, Pan American Health Organization, Portuguese Red Cross, Sahana Software Foundation, Save the Children, Sciences Po Paris, Skoll Foundation, School of Oriental and African Studies, Tallinn University, TechChange, Tulane University, UC Berkeley, UN Volunteers, UNAIDS, UNDP Bangladesh, University of Algiers, University of Colorado, University of Portsmouth, UNOPS, Ushahidi-Liberia, WHO, World Bank and Yale University.

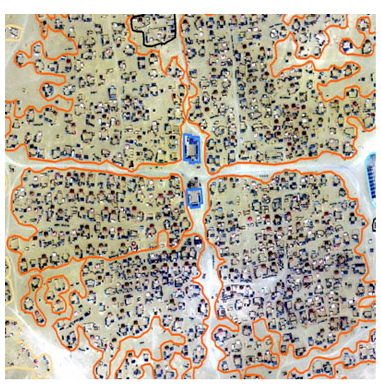

Over the past twelve months, major SBTF deployments have included the Colombia Disaster Simulation with UN OCHA Colombia, Sudan Vote Monitor, Cyclone Yasi, the Christchurch Earthquake, the Libya Crisis Map and the Alabama Tornado. SBTF volunteers were also involved in other projects in Mumbai, Khartoum, Somalia and Syria with partners such as UNHCR and AI-USA. The Somalia and Syria projects saw the establishment of a brand new SBTF team, the Satellite Imagery Team, the eleventh team to join the SBTF Group (see figure below). SBTF Coordinators organized and held several trainings for new members in 2011, as did partners like the Humanitarian OpenStreetMap Team. You can learn more about all this (and join!) by visiting the SBTF blog.

We’re grateful to have been featured in the media on several occasions over the past year, with coverage documenting how we’re changing the world, one map at a time. CNN, The Guardian (UK), The Economist, Fast Company, IRIN News, The Washington Post, Technology Review, PBS and NPR all covered our efforts. The SBTF has also been presented at numerous conferences, such as TEDxSiliconValley, the Skoll World Forum, re:publica, the ICRC Global Communications Forum, the ESRI User Conference and the Share Conference. But none of this would have been possible without the inspiring dedication of SBTF members and Team Coordinators.

Indeed, were it not for them, the Libya Crisis Map that we launched for UN OCHA would have looked like this (as would all the other maps):

So this digital birthday cake goes to every SBTF member who offered their time and thereby made this global network what it is today. You all know who you are, and you have my sincere gratitude, respect and deep admiration. SBTF Coordinators and Core Team Members deserve very special thanks and recognition for the many extra days, and indeed weeks, they have committed to the SBTF. We are also most grateful to our partners, including Ning, UN OCHA-Geneva and OCHA-Colombia, for their support, camaraderie and mentorship. So a big, big thank you to all and a very happy birthday, Mapsters! I look forward to the second candle!