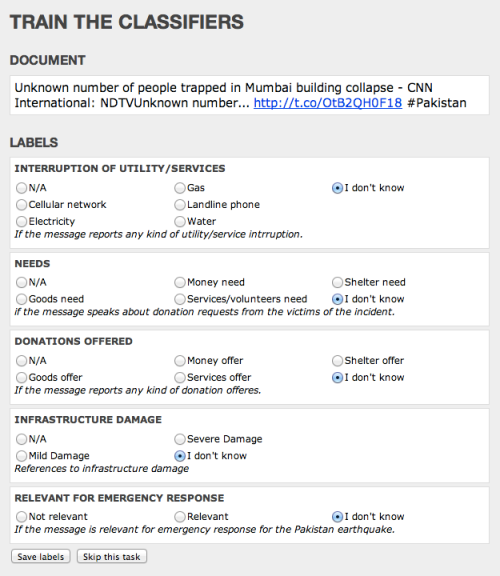

We have completed our digital humanitarian operation in the Philippines after five continuous days with MicroMappers. Many, many thanks to all volunteers from all around the world who donated their time by clicking on tweets and images coming from the Philippines. Our UN OCHA colleagues have confirmed that the results are being shared widely with their teams in the field and with other humanitarian organizations on the ground.

In terms of preliminary figures (to be confirmed):

- Tweets collected during the first 48 hours after landfall = ~230,000

- Tweets automatically filtered for relevancy/uniqueness = ~55,000

- Tweets clicked using the TweetClicker = ~30,000

- Relevant tweets triangulated using TweetClicker = ~3,800

- Triangulated tweets published on live Crisis Map = ~600

- Total clicks on TweetClicker = ~90,000

- Images clicked using the ImageClicker = ~5,000

- Relevant images triangulated using ImageClicker = ~1,200

- Triangulated images published on live Crisis Map = ~180

- Total clicks on ImageClicker = ~15,000

- Total clicks on MicroMappers (Image + Tweet Clickers) = ~105,000

Since every tweet and image uploaded to the Clickers was clicked on by (at least) three individual volunteers for quality control purposes, the number of clicks is roughly three times the number of tweets and images uploaded to the respective Clickers: ~30,000 tweets account for ~90,000 clicks and ~5,000 images for ~15,000 clicks. In sum, digital humanitarian volunteers have clocked a grand total of ~105,000 clicks to support humanitarian operations in the Philippines.
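For readers who want to check the arithmetic, here is a minimal sketch in Python. It uses only the approximate, preliminary figures listed above and assumes exactly three clicks per item (the actual rule was "at least" three); the variable and function names are made up for illustration and are not part of MicroMappers.

```python
# Approximate, preliminary figures from the list above.
VOTES_PER_ITEM = 3  # assumption: exactly three volunteers per item

tweets_clicked = 30_000  # tweets uploaded to the TweetClicker
images_clicked = 5_000   # images uploaded to the ImageClicker


def expected_clicks(items, votes_per_item=VOTES_PER_ITEM):
    """Clicks needed if every item is reviewed votes_per_item times."""
    return items * votes_per_item


tweet_clicks = expected_clicks(tweets_clicked)
image_clicks = expected_clicks(images_clicked)
total_clicks = tweet_clicks + image_clicks

print(tweet_clicks, image_clicks, total_clicks)  # 90000 15000 105000
```

The totals match the ~90,000, ~15,000 and ~105,000 clicks reported above.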

While the media has largely focused on the technology angle of our digital humanitarian operation, the human story is for me the more powerful message. This operation succeeded because people cared. Those ~105,000 clicks did not magically happen. Each and every single one of them was clocked by humans, not machines. At one point, we had over 300 digital volunteers from the world over clicking away at the same time on the TweetClicker and more than 200 on the ImageClicker. This kind of active engagement by total strangers—good “digital Samaritans”—explains why I find the human angle of this story to be the most inspiring outcome of MicroMappers. “Crowdsourcing” is just a new term for the old saying “it takes a village,” and sometimes it takes a digital village to support humanitarian efforts on the ground.

Until recently, when disasters struck in faraway lands, we would watch the news on television wishing we could somehow help. That private wish—that innate human emotion—would perhaps translate into a donation. Today, not only can you donate cash to support those affected by disasters, you can also donate a few minutes of your time to support the operational humanitarian response on the ground by simply clicking on MicroMappers. In other words, you can translate your private wish into direct, online public action, which in turn translates into supporting offline collective action in the disaster-affected areas.

Clicking is so simple that anyone with Internet access can help. We had high schoolers in Qatar, fire officers in Belgium, graduate students in Boston, a retired couple in Kenya and young Filipinos all clicking away. They all cared and took the time to try and help others, often from thousands of miles away. That is the kind of world I want to live in. So if you share this vision, then feel free to join the MicroMappers list-serve.

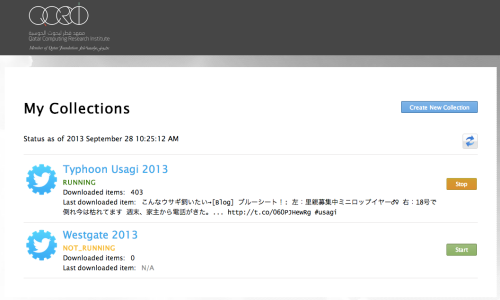

Considering that MicroMappers is still very much under development, we are all pleased with the results. There were of course many challenges; the most serious was the CrowdCrafting server that hosts our Clickers. Unfortunately, that server was not able to handle the load and traffic generated by digital volunteers. It crashed twice and slowed our Clickers to a complete stop at least a dozen times during the past five days. At times, it would take 10-15 seconds for a new tweet or image to load, which was frustrating. We were also limited in the number of tweets and images we could upload at any given time, usually ~1,500 at most. Any larger load would seriously slow down the Clickers. So it is rather remarkable that digital volunteers managed to clock more than 100,000 clicks given the repeated interruptions.

Besides the server issue, the other main bottleneck was the geo-location of the ~30,000 tweets and ~5,000 images tagged using the Clickers. We do have a Tweet and Image GeoClicker but these were not slated to launch until next week at CrisisMappers 2013, which meant they weren’t ready for prime time. We’ll be sure to launch them soon. Once they are operational, we’ll be able to automatically push triangulated tweets and images from the Tweet and Image Clickers directly to the corresponding GeoClickers so volunteers can also aid humanitarian organizations by mapping important tweets and images directly.

There’s a lot more that we’ve learned throughout the past five days and much room for improvement. We have a long list of excellent suggestions and feedback from volunteers and partners that we’ll be going through starting tomorrow. The most important next step is to get a more powerful server that can handle a lot more load and traffic. We’re already taking action on that. I have no doubt that our clicks would have doubled without the server constraints.

For now, though, BIG thanks to the SBTF Team, in particular Jus McKinnon, and to the QCRI et al team, in particular Ji Lucas, Hemant Purohit and Andrew Ilyas, for putting in very, very long hours, day in and day out, on top of their full-time jobs and studies. And finally, BIG thanks to the World Wide Crowd, to all of you who cared enough to click and support the relief operations in the Philippines. You are the heroes of this story.